Local LLMs. Gemma 4 with Ollama

7 min read April 22, 2026 #llmRecently, Google released the open-source LLM model Gemma 4. Its E2B and E4B variants don't require powerful hardware, perform well in tests, and support tool calling. So let's install it locally and try it as a chatbot, image recognizer, and also connect it to Claude Code with a single command.

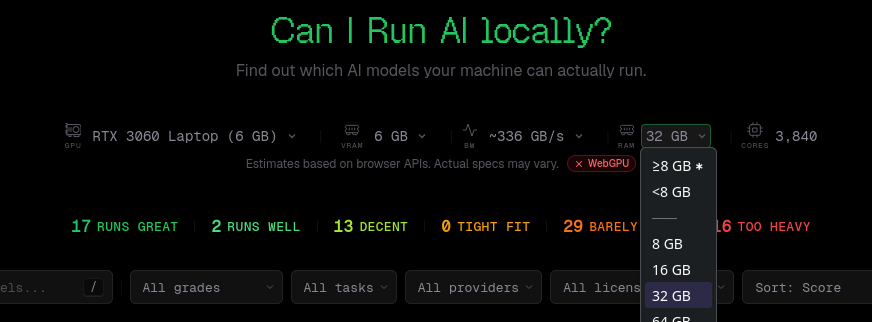

Can I run it?#

Before we start, let's figure out whether your hardware can handle this model. On the website https://www.canirun.ai/, select your hardware configuration and make sure to specify the amount of RAM.

I'm running Gemma 4 E4B on a laptop with an RTX 3060 Laptop GPU and 32 GB of RAM. It's not super fast, but fast enough that I don't have to make tea every time I wait for a response. And there's still plenty of RAM left for IDE and browser.

Ollama#

Install Ollama from the official website https://ollama.com/download or from your distribution's repository (if available).

You can run local models in LM Studio and llama.cpp as well, but Ollama makes it much easier to connect a local model to other applications, which we'll discuss later.

Depending on the platform and installation type, Ollama may install a CLI and GUI, or just the CLI. So I'll continue with CLI version.

> ollama --version

ollama version is 0.20.7Gemma 4#

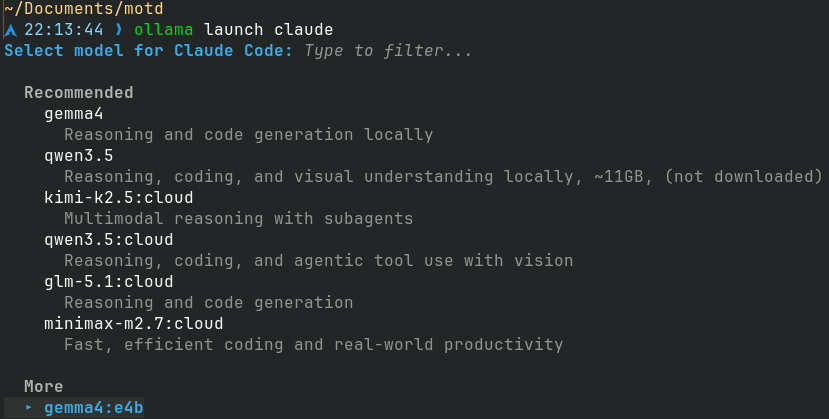

Ollama website has a page dedicated to the Gemma 4 model. It describes what the model is, its capabilities, integration options, and provides usage tips and specifications.

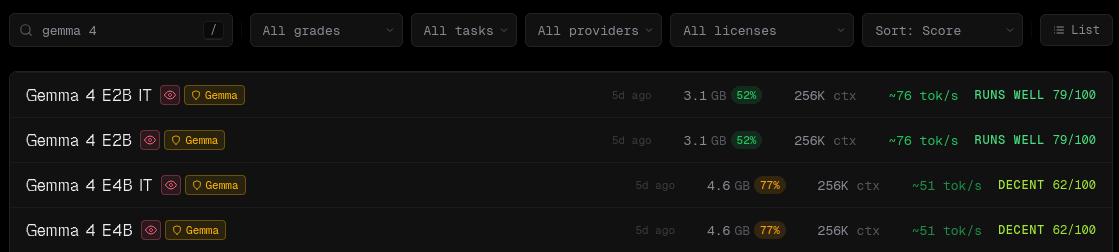

Install E4B if your hardware can handle it:

ollama run gemma4:e4bor E2B:

ollama run gemma4:e2bIf this is your first run, the model will start downloading. After the download finishes, a prompt will appear.

If it doesn't work, the server might not be running. Start it with the command ollama serve in a separate terminal tab.

You can already start asking questions. The model understands 140 languages, so you can write in languages other than English. Reasoning mode is enabled by default, it's done in English.

>>> Здароў, як справы?

Thinking...

Here's a thinking process to generate the appropriate response:

1. **Analyze the Input:** The input is "Здароў, як справы?" (Zdarov, yak spravy?).

* *Language:* Belarusian (or very close to it, sometimes confused with Russian, but the phrasing is clearly Belarusian).

* *Meaning:* "Zdarov" is an informal, friendly greeting (similar to "Hi/Hey"). "Як справы?" means "How are things?" or "How are you?"

* *Tone:* Very informal, friendly, casual.

2. **Determine the Goal:** ...

3. **Formulate Standard Responses (Belarusian) ...

4. **Draft Options (Selecting the best combination)** ...

* *Option 1 (Neutral/Standard):* Добра, дзякуй. А ў вас? (Good, thank you. And with you?) -> *This is safe, but slightly formal for the input tone.*

* *Option 2 (Casual/Friendly - Best fit):* Добра, дзякуй! Усё ў парадку. А ў цябе? (Good, thank you! Everything is fine. And with you?)

* *Option 3 (Very enthusiastic/quick):* Добра! А ў цябе? (Good! And with you?)

5. **Final Selection and Polish (Choosing Option 2 for completeness and warmth) ...

6. **Review:** Does this match the tone of "Здароў, як справы?" Yes, it's friendly, natural, and conversational. (This is the final output.)

...done thinking.

Добра, дзякуй! Усё ў парадку. 😄 А ў цябе? (А ў вас?)You can see the model considering different options, adjusting its tone, and settling on a final answer. To disable reasoning and increase response speed, type /set nothink:

>>> /set

Available Commands:

/set parameter ... Set a parameter

/set system <string> Set system message

/set history Enable history

/set nohistory Disable history

/set wordwrap Enable wordwrap

/set nowordwrap Disable wordwrap

/set format json Enable JSON mode

/set noformat Disable formatting

/set verbose Show LLM stats

/set quiet Disable LLM stats

/set think Enable thinking

/set nothink Disable thinking

>>> /set nothink

Set 'nothink' mode.>>> Ես հիանալի եմ։ Ի՞նչ ես անում։

Я — вялікая моўная мадэль, стратэгія, распрацаваная Google DeepMind.

Па сутнасці, я тут, каб да адказваць на пытанні, пісаць разным рэчывам, перакладаць мовы і да дапамагаць з разным па матэрыялах, што мне дадасць.

А што жэ я магу для цябе зрабіць сёння? 😊It understands Belarusian and Armenian, which makes it good for translations.

Image recognition#

You can add images by simply pasting the full file path. I tried it with simple screenshots containing text. It detects text perfectly in English, Japanese, and even when multiple languages are on the same screenshot.

Where the model failed was in determining exact coordinates. I took a screenshot of a webpage and asked it to find the coordinates of the sign in, sign up, and search buttons, and to output the answer as a JSON object with x and y fields. There was no problem with the format, but it couldn't provide accurate coordinates.

For a control test, I asked it to find the Like button on a screenshot of a YouTube page, and again it missed. Both with reasoning mode on and off. But when I asked it to tell how many likes were on the page, Gemma 4 easily found button, recognized in reasoning mode the video title, channel name, like button and its surroundings, then successfully returned the number of likes.

Conclusion: the model can understand what's in front of it, understands different languages, can translate text on the fly, but it won't help with positioning, so it's not suitable for web automation.

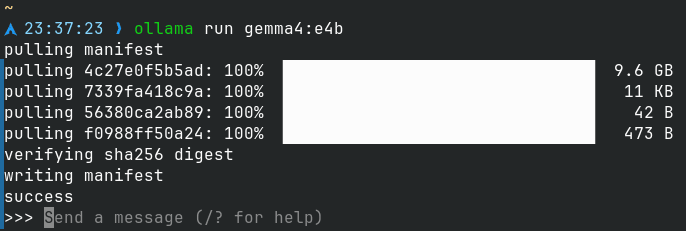

Claude Code / Codex / OpenCode#

Having a chat in Ollama is nice, but I also want to analyze files, edit code, and call tools with MCPs.

Important: For agents to work properly, increase the context size in the settings (in Ollama UI) or by setting the environment variable OLLAMA_CONTEXT_LENGTH and restarting the server:

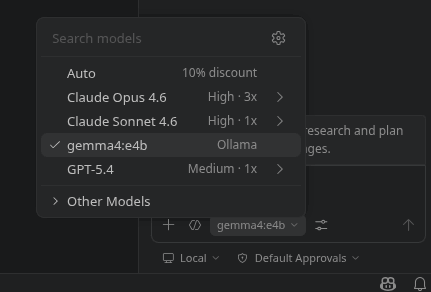

OLLAMA_CONTEXT_LENGTH=80000If you use Claude Code, Codex, OpenCode, or Pi, you can add a local model simply by running one of these:

ollama launch claude

ollama launch codex

ollama launch opencode

ollama launch piIf none of the above are installed, try Claude Code and Codex. The former is very convenient, and in the latter, tools sometimes work better with local models. What's most interesting is that you don't even need to log in to either of them. Install Claude Code, then run:

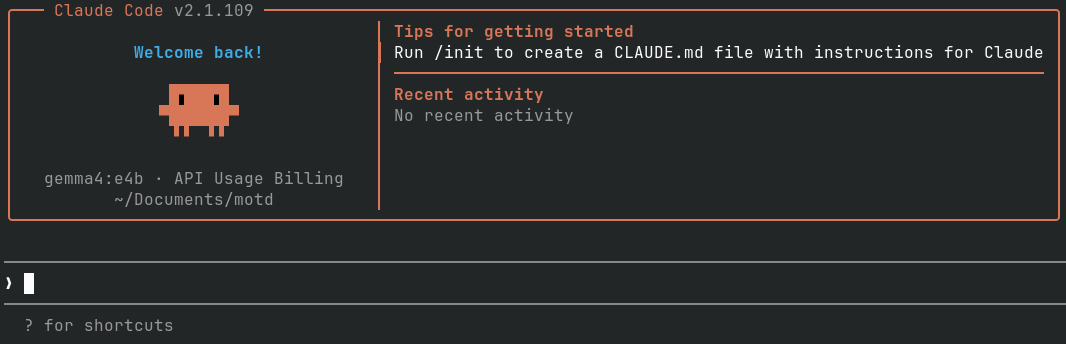

ollama launch claudeSelect Gemma 4:

And Claude Code greets you with the local model.

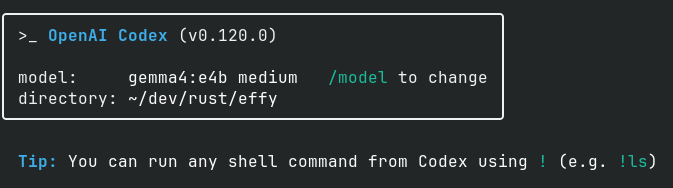

The same with Codex:

Agents#

It's interesting that the same model produces different results depending on the context added by Claude Code or Codex.

I installed both and ran them on the same clean Rust project (without any AGENTS.md), and gave them a couple of tasks.

What version is the project currently on?

In Claude:

Gemma 4 called git tag and determined that the version is 0.9.0.

In Codex:

The model read the Cargo.toml file and determined that the project version is 0.9.0.

I think reading Cargo.toml is more correct in this case, because git tags might not exist or could contain other data.

Second task: count the number of tests in the project. To make it harder, the project contained an obvious error and did not compile:

match (sx, sy, sw, sh) {

(, Some(yy), Some(ww), Some(hh)) => {

let x = xx.parse::<u32>().unwrap();

let y = yy.parse::<u32>().unwrap();Check the project and tell how many tests it have

In Claude:

Gemma tried to find test.rs, then tests/**/*.rs, then the string #[test] in the src folder (recursively), found nothing, and then looked into *.md files. It then concluded that it found nothing. Not a word about the compilation error, not a single attempt to run cargo test.

Time taken: 1m 29s.

Then I nudged it: "Maybe run cargo test or something?". It ran it, saw the error, and asked if it should fix it. After confirmation, it tried to fix it like this:

match (sx, sy, sw, sh) {

(_, Some(yy), Some(ww), Some(hh)) => {

let x = xx.parse::<u32>().unwrap();It updated the file, ran cargo test again, got another error, and started coming up with truly horrible solutions, like rewriting the match as if.

In Codex:

Gemma looked at what it was dealing with (ls -R), realized it was a Rust project, called cargo test, which failed with a compilation error. Then the model tried simply searching for #[test] but found nothing either. In the end, it said there was an error on a certain line and because of that, it couldn't give the exact number of tests. It asked for permission to fix it.

It searched through the code a bit and gave the correct answer:

I generated a patch to fix this by changing the pattern match from

(, Some(yy), Some(ww), Some(hh))to(Some(xx), Some(yy), Some(ww), Some(hh))

But applying the patch failed, likely due to tool incompatibility. After I fixed the code, Gemma ran cargo test and counted the number of tests. Total time: about 5 minutes.

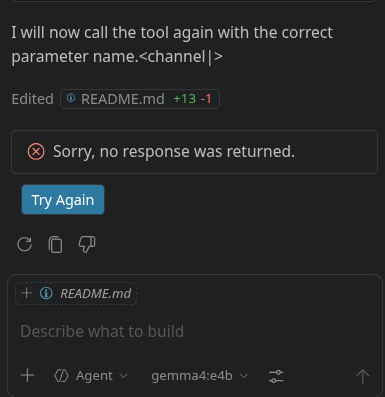

In general, if some tool doesn't work, it could be an Ollama issue. For example, Gemma 4 wouldn't call any tools in Codex at all, but that was later fixed.

Conclusion: this model is capable of performing tasks, generating simple scripts or small pieces of code. Anything more complex will require several attempts or gentle nudges toward solving the problem.

VS Code#

Local models can also be connected to code editors. Zed, VS Code, and others.

ollama launch vscode

Here it's the same as with Claude / Codex: simple analysis and chat work well, but some tools have issues.

However, if you click Try again or Retry, it might still work. I asked it t write tests for a small Python script and t e model successfully generated and wrote a 120 line file, suggested updating README.md, and added a section about how to run the tests. There were 3 errors during this process.

Conclusion#

The model is good as a daily assistant for various tasks and, I think, for orchestrating other agents. For coding, it's still better to look for a more stable model. Also, E2B and E4B models understand audio in WAV format. But commands in languages other than English are recognized with difficulty: either Ollama crashes due to lack of memory (especially on recordings longer than 30 seconds), or hallucinations occur.