Local LLMs. OmniCoder-9B

May 13, 2026 #llm

Continuing to explore local models, I came across a well-balanced option: OmniCoder-9B. I tested it for a whole week on various tasks, and it handled all of them decently. Unlike with Gemma 4 and Qwen, once I connected this model to Codex/Claude, tool calling worked without any issues.

Download the model with Ollama:

```shell
ollama pull carstenuhlig/omnicoder-9b
```

The model takes up 5.7 GB, even less than Qwen3.5:9b (6.6 GB). However, unlike Gemma 4 e4b with its 4 billion parameters, OmniCoder-9B has 9 billion, so keep in mind that it needs more memory. I run it on a laptop with an RTX 3060 Laptop GPU (6 GB VRAM) and 32 GB of RAM. On more powerful hardware there shouldn't be any issues.
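As a rough sanity check, the download size can be converted into an average number of bits per weight. This sketch makes three assumptions: the 5.7 GB figure is decimal gigabytes of weights, 9 billion is the nominal parameter count, and the KV cache and runtime buffers (which grow with the context window) can be ignored.

```python
# Back-of-the-envelope estimate of how compactly the weights are stored.
# Assumptions: sizes are decimal GB (10**9 bytes), 9e9 is the nominal
# parameter count, and KV cache / runtime overhead are not included.

def bits_per_weight(size_gb: float, params: float) -> float:
    """Average storage per parameter, in bits."""
    return size_gb * 1e9 * 8 / params


def vram_headroom_gb(vram_gb: float, weights_gb: float) -> float:
    """VRAM left for the KV cache and buffers if all weights sit on the GPU."""
    return vram_gb - weights_gb


print(f"OmniCoder-9B: {bits_per_weight(5.7, 9e9):.2f} bits/weight")
print(f"Headroom on a 6 GB GPU: {vram_headroom_gb(6.0, 5.7):.1f} GB")
```

The result is roughly 5 bits per weight, so the model is quantized well below 16-bit precision. With only about 0.3 GB of headroom on a 6 GB card, a larger context will most likely push part of the model into system RAM.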

Agents

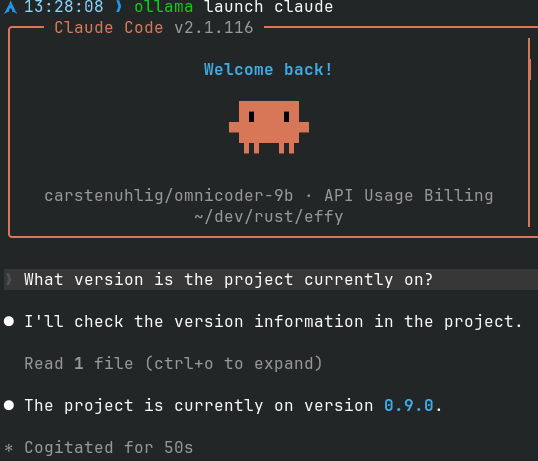

Let's start with the same task as with Gemma 4: determining the current version of a Rust project.

What version is the project currently on?

After reading Cargo.toml, the model identified the current project version.

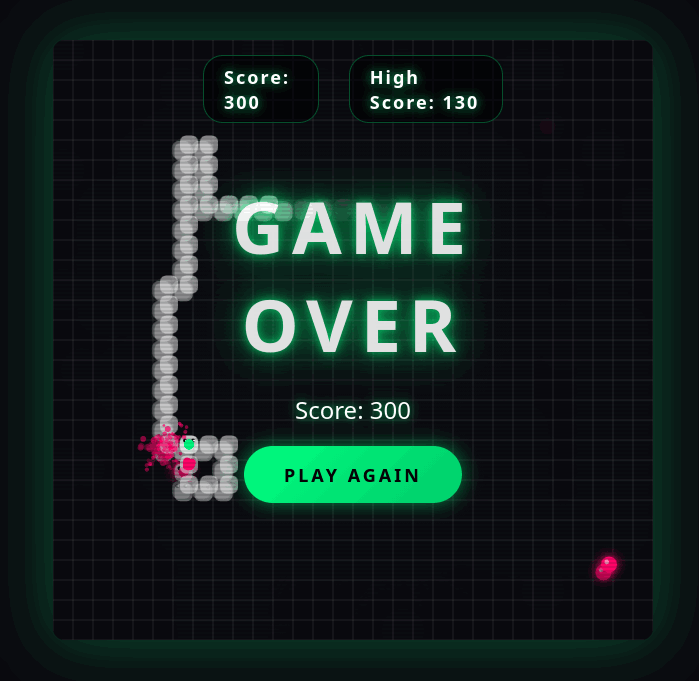

Snake Game in HTML5

Now onto coding. Let's increase the context window and launch Claude.

```shell
OLLAMA_CONTEXT_LENGTH=80000
```
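The OLLAMA_CONTEXT_LENGTH setting controls the default context window of the Ollama server. When you call the model directly rather than through an agent, you can request a context size per call with the `num_ctx` option of Ollama's REST API. The sketch below is minimal: it assumes the server listens on the default `http://localhost:11434` and uses only the standard library.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/chat"  # default local endpoint (assumption)
MODEL = "carstenuhlig/omnicoder-9b"


def build_chat_request(prompt: str, num_ctx: int = 80000) -> urllib.request.Request:
    """Build a non-streaming /api/chat request with a per-call context window."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
        "options": {"num_ctx": num_ctx},  # overrides the server default for this call
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, num_ctx: int = 80000) -> str:
    """Send the request to a running Ollama server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, num_ctx)) as resp:
        body = json.load(resp)
    return body["message"]["content"]
```

With the server running, `chat("Write a haiku about Rust")` returns the model's reply as a plain string.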

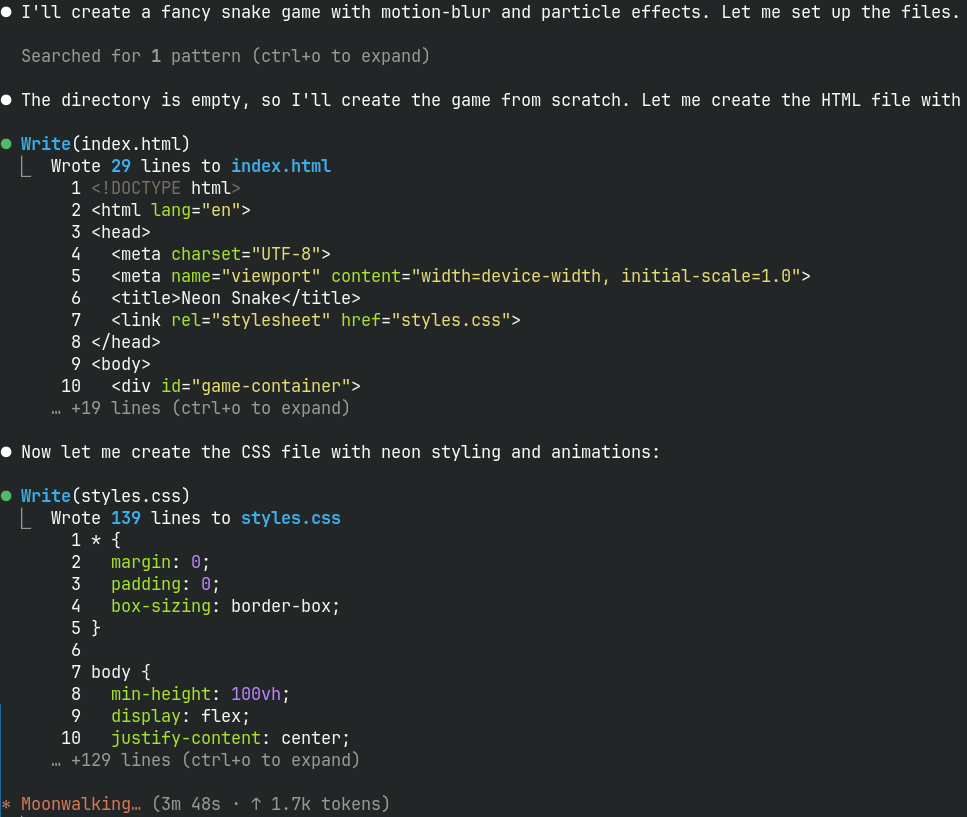

```shell
ollama launch claude
```

Generate a fancy snake game in HTML5. Use interesting effects such as motion-blur and particles. Keep css/js files separately from html.

Creating the HTML and CSS files took 3.5 minutes, and the JS took another 3.5:

● I've created a fancy neon snake game with the following features:

Visual Effects:
• Motion blur trail - The snake leaves a fading trail effect
• Particle explosions - Colorful particles burst when eating food or dying
• Screen shake - Intense shake on collisions and food consumption
• Glow effects - Neon glow around the snake head and pulsing food
• CRT scanline overlay - Retro screen effect

Game Features:
• Score tracking with high score (saved to localStorage)
• Progressive speed increase as you eat
• Smooth WASD/Arrow key controls
• Pulsing food with highlight effect
• Gradient coloring on snake body

Files created:
• index.html - Main game page with start/game over screens
• styles.css - Neon styling with glow effects and CRT overlay
• game.js - Snake logic, particles, and collision handling

Controls: Arrow keys or WASD to move. The food pulses and has a glowing ring effect. When you eat food, particles explode and the screen shakes. Game over triggers a larger explosion.

✻ Cogitated for 7m 3s

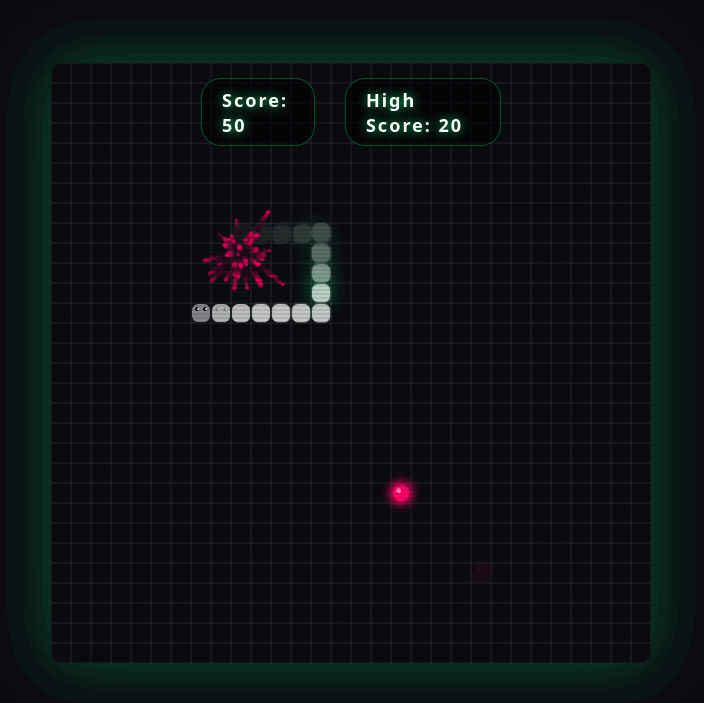

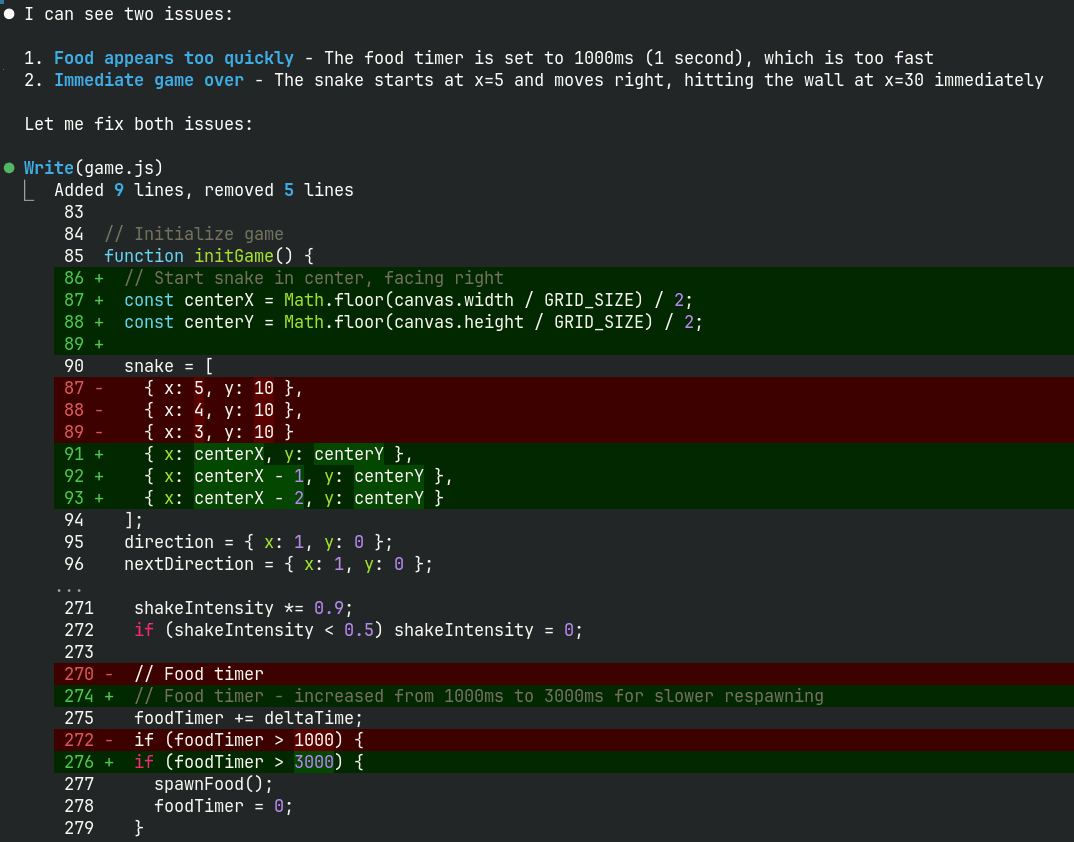

The first version was already functional, with two problems. The snake initially faced into its own body, so you had to press up or down quickly to avoid losing. The food also jumped to a new location every second, which made it hard to catch. On the other hand, the game logic, the particle burst when food is eaten, collision detection, and screen shake all worked perfectly.

Result:

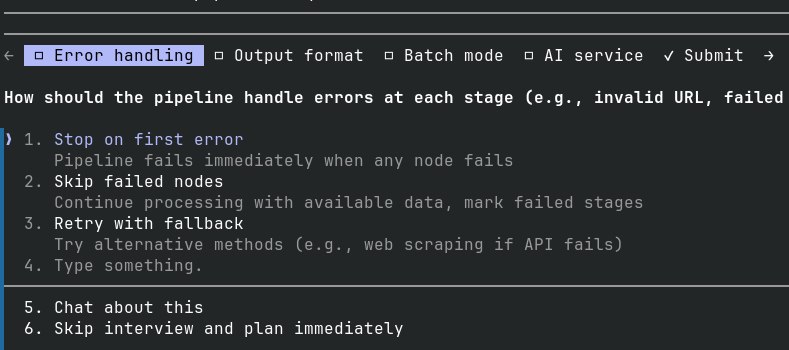
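Both bugs in the first version (the snake starting pointed into itself, and the food relocating on a timer) have small, common fixes. The sketch below is in Python rather than the game's JavaScript and uses hypothetical names; it is not the generated game.js. It makes three changes:

- The snake starts moving away from its own body.
- A direction change that would reverse the snake onto itself is ignored.
- Food respawns only when it is eaten.

```python
import random

Point = tuple[int, int]

UP, DOWN, LEFT, RIGHT = (0, -1), (0, 1), (-1, 0), (1, 0)


def initial_direction(body: list[Point]) -> Point:
    """Head away from the neck (head is body[0]) so the first tick is safe."""
    (hx, hy), (nx, ny) = body[0], body[1]
    return (hx - nx, hy - ny)


def turn(current: Point, requested: Point) -> Point:
    """Ignore a 180-degree turn that would drive the head into the neck."""
    if (current[0] + requested[0], current[1] + requested[1]) == (0, 0):
        return current
    return requested


def spawn_food(body: list[Point], width: int, height: int, rng: random.Random) -> Point:
    """Pick a random free cell. Called only when food is eaten, never on a timer."""
    free = [(x, y) for x in range(width) for y in range(height) if (x, y) not in body]
    return rng.choice(free)


def step(body: list[Point], direction: Point, food: Point,
         width: int, height: int, rng: random.Random) -> tuple[list[Point], Point, bool]:
    """Advance one tick and return (body, food, alive)."""
    head = (body[0][0] + direction[0], body[0][1] + direction[1])
    inside = 0 <= head[0] < width and 0 <= head[1] < height
    if not inside or head in body[:-1]:  # the tail cell is vacated this tick
        return body, food, False
    if head == food:
        body = [head] + body  # grow: keep the tail
        food = spawn_food(body, width, height, rng)
    else:
        body = [head] + body[:-1]  # move: drop the tail
    return body, food, True
```

Because `spawn_food` runs only in the `head == food` branch, the food stays where it is until the snake reaches it.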

Planning Mode

In Claude's planning mode, I asked it to create a Python pipeline for processing links: URL validation, metadata extraction, sending text to TTS, generating a short summary of the article, and so on.

OmniCoder generated a reasonable plan with nodes like these:

```text
┌─────────┐
│   URL   │ ──→ validate & detect type (regex)
└─────────┘
     ↓
┌─────────────────────────┐
│ Type Router             │
│ ├─ YouTube URL → yt-dlp │
│ └─ Article URL → fetch  │
└─────────────────────────┘
     ↓
┌────────────────────────────┐
│ Node 1: Metadata Extractor │
│ (yt-dlp metadata + content)│
│ ↓ saves to temp file       │
└────────────────────────────┘
     ↓
┌───────────────────────┐
│ Node 2: Summarizer    │
│ (Ollama: summary)     │
│ ↓ reads temp file     │
│ ↓ saves to temp file  │
└───────────────────────┘
     ↓
┌───────────────────────┐
│ Node 3: Mindmap       │
│ (Ollama: mindmap)     │
│ ↓ reads temp file     │
│ ↓ saves to temp file  │
└───────────────────────┘
     ↓
┌───────────────────────┐
│ Node 4: TTS Generator │
│ (Ollama: tts)         │
│ ↓ reads temp file     │
│ ↓ saves to temp file  │
└───────────────────────┘
     ↓
┌───────────────────────────┐
│ Node 5: Markdown Renderer │
│ (assembles all outputs)   │
└───────────────────────────┘
```

It planned the input and output parameters for each node, as well as the file structure:

```text
summarize/
├── summarize.py      # Main CLI entry point
├── nodes/            # Node implementations
│   ├── base.py       # Abstract base class for nodes
│   ├── router.py     # URL type detection & routing
│   ├── metadata.py   # yt-dlp extraction
│   ├── summarizer.py # Ollama summary
│   ├── mindmap.py    # Ollama mindmap
│   ├── tts.py        # TTS
│   └── renderer.py   # Markdown assembly
└── requirements.txt  # Dependencies
```

Full plan: now-we-need-to-velvet-creek.txt
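The plan chains nodes that hand data to each other through temporary files. Here is a minimal sketch of that shape. It assumes a hypothetical `Node` base class with a single `run(input_path) -> output_path` method. The real nodes/base.py generated by the model may differ. The two example nodes are stand-ins, not the yt-dlp or Ollama steps.

```python
import tempfile
from abc import ABC, abstractmethod
from pathlib import Path


class Node(ABC):
    """One pipeline stage: reads the previous stage's file, writes its own."""

    name = "node"

    def __init__(self, workdir: Path):
        self.workdir = workdir

    @abstractmethod
    def process(self, text: str) -> str:
        """Transform the input text; subclasses implement the actual work."""

    def run(self, input_path: Path) -> Path:
        text = input_path.read_text(encoding="utf-8")
        output_path = self.workdir / f"{self.name}.txt"
        output_path.write_text(self.process(text), encoding="utf-8")
        return output_path


class Uppercase(Node):  # hypothetical stand-in node
    name = "upper"

    def process(self, text: str) -> str:
        return text.upper()


class FirstLine(Node):  # hypothetical stand-in for a "summarizer"
    name = "first_line"

    def process(self, text: str) -> str:
        return text.splitlines()[0] if text else ""


def run_pipeline(source_text: str, node_classes: list[type[Node]]) -> str:
    """Run the nodes in order, passing file paths between them."""
    with tempfile.TemporaryDirectory() as tmp:
        workdir = Path(tmp)
        path = workdir / "input.txt"
        path.write_text(source_text, encoding="utf-8")
        for cls in node_classes:
            path = cls(workdir).run(path)
        return path.read_text(encoding="utf-8")
```

For example, `run_pipeline("hello\nworld", [Uppercase, FirstLine])` returns `"HELLO"`. Passing file paths rather than in-memory objects mirrors the "reads temp file / saves to temp file" steps in the plan. Using a persistent directory instead of `TemporaryDirectory` would let you inspect each intermediate result.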

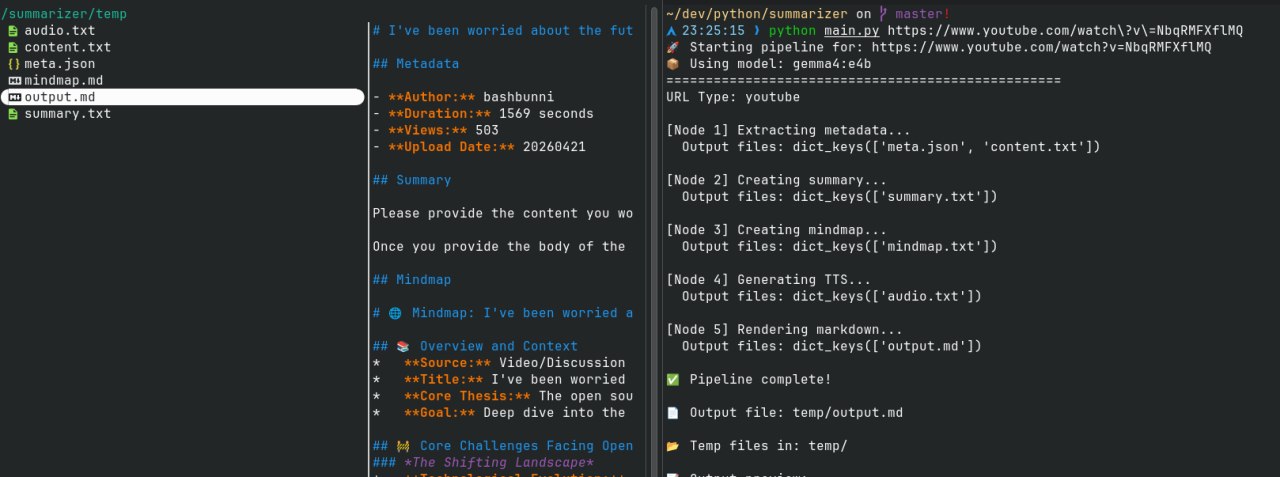

Implementing everything then took about 10 minutes. The result contained only one error. It was not in the program logic but in the regular expressions for extracting YouTube video IDs: the model forgot to add a capture group, even though the code later relies on that group.

```python
YOUTUBE_PATTERNS = [
    r'https?://www\.youtube\.com/watch\?v=[\w_-]+',
    r'https?://youtu\.be/[\w_-]+',
    r'https?://m\.youtube\.com/watch\?v=[\w_-]+',
]

for pattern in self.YOUTUBE_PATTERNS:
    match = re.match(pattern, url)
    if match:
        if 'youtu.be/' in url:
            return url.split('youtu.be/')[1].split('?')[0].split('/')[0]
        # Bug: the patterns define no group, so match.group(1) raises IndexError
        return match.group(1) if match.group(1) else match.group(0).split('v=')[1].split('&')[0]
```

Additionally, in the Ollama nodes it set the default model to something other than Gemma 4. Still, the result is very decent, considering that all this code was written in a single iteration.

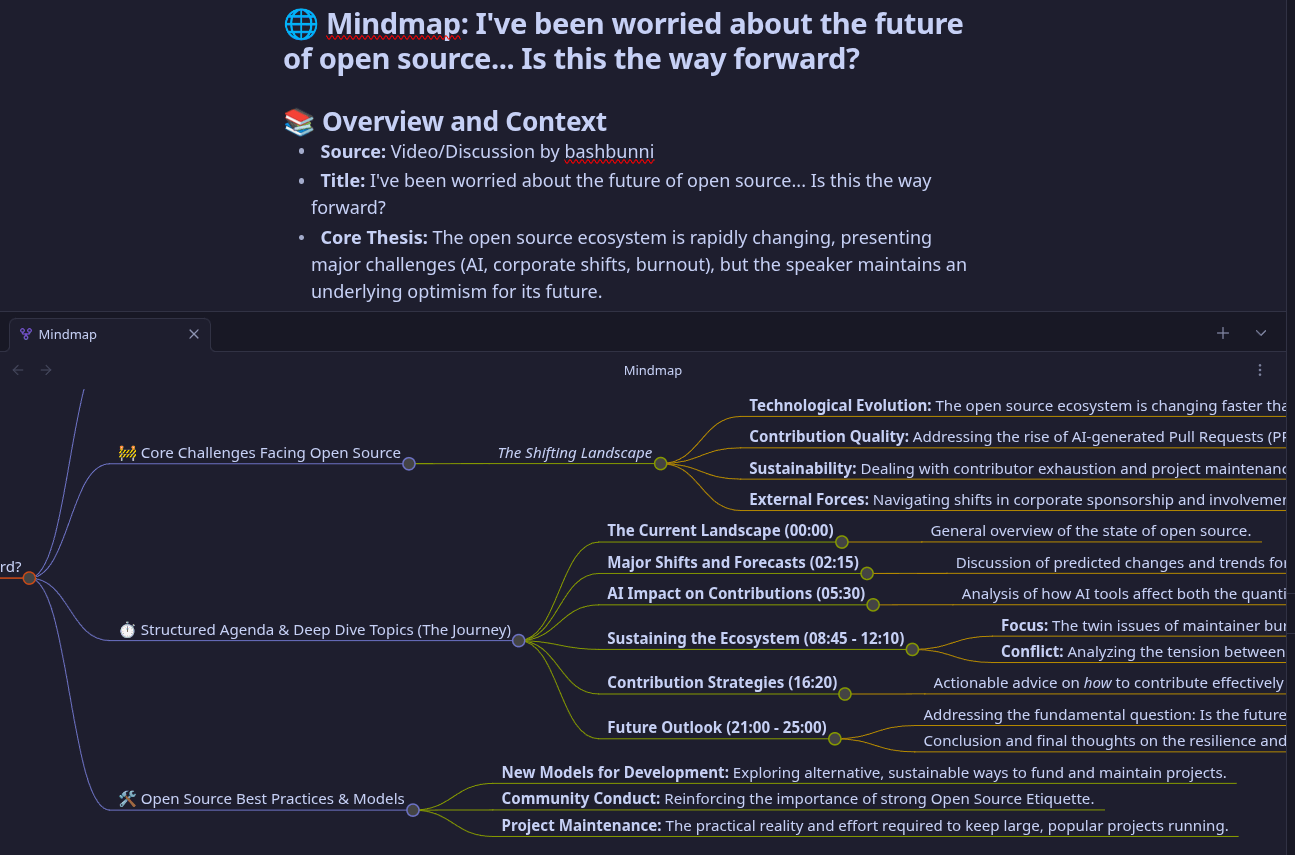

Here's the finished application in action. The local Gemma 4 handled the summary and mindmap.

Adding functionality to a Java project

Let's see how it handles a Java project by adding a new FFmpeg parameter to https://github.com/aNNiMON/effybot:

Add audio noise reduction parameter (afftdn) with noise reduction -40, -20, -10, -5, -2 dB.

OmniCoder studied the project, understood the organization of existing parameters, and generated a class:

```java
package com.annimon.effybot.parameters;

import com.annimon.effybot.commands.ffmpeg.Visitor;
import java.util.List;

public class AudioNoiseReduction extends StringParameter {
    public static final String ID = "anoreduce";

    private static final List<String> VALUES = List.of(
            "", "5", "12", "20", "30", "40"
    );

    public AudioNoiseReduction() {
        super(ID, "🔈 Noise Reduction", VALUES, "");
    }

    @Override
    public String describeValue(String value) {
        if (value.isEmpty()) {
            return "NONE";
        } else {
            return super.describeValue(value);
        }
    }

    @Override
    public int defaultColumnsCount() {
        return 4;
    }

    @Override
    public <I> void accept(Visitor<I> visitor, I input) {
        visitor.visit(this, input);
    }
}
```

Then it added handling for it to the Visitor interface:

```java
interface Visitor<I> {
    // ...
    void visit(AudioNoiseReduction p, I input);
}
```

and to its implementation, FFmpegCommandBuilder:

@Override

public void visit(AudioNoiseReduction MediaSession) {

if (discardAudio) return;

if (p.getValue().isEmpty()) return

audioFilters.add("afftdn=nr=" + p.getValue());

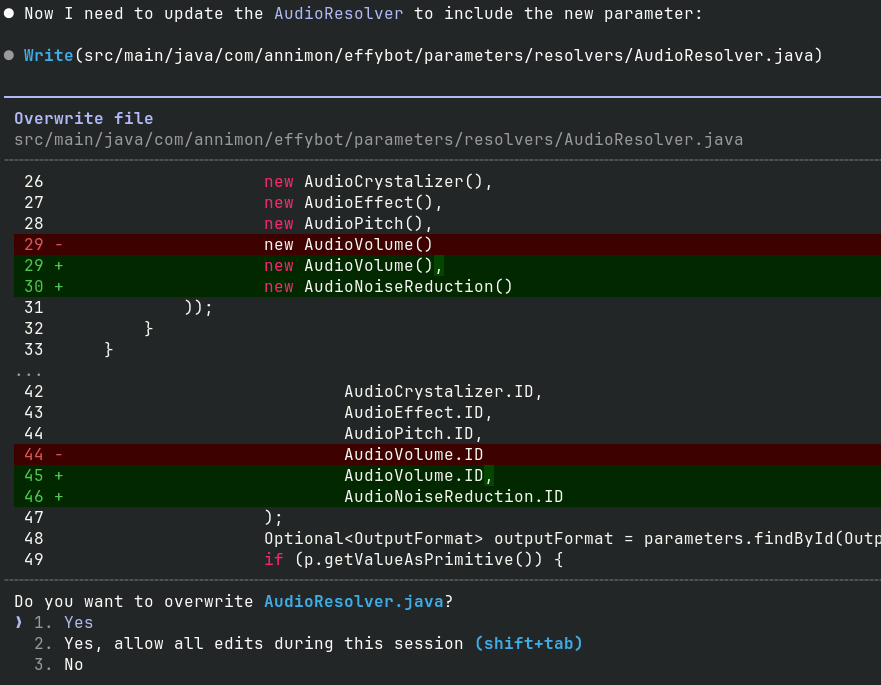

}It also added the creation of AudioNoiseReduction in AudioResolver where all audio parameters are created.

The task isn't very complex, but the model clearly succeeded on the first attempt, taking about 7 minutes in total.

Conclusion

I'm very satisfied with this model. It's relatively compact and runs at an acceptable speed. It isn't the best choice for everyday tasks such as writing a short summary, but it works great with tools and across different programming languages.